swXtch AI Router connects live media directly to the world’s AI models without breaking timing, reliability, or scale.

Moderate Content

Detect inappropriate or harmful content

Add Captions

Generate automatic subtitles

Detect Objects

Identify and track objects in video

Create Highlights

Generate highlight reels from key moments

Translate Audio

Convert speech to different languages

Describe Video

Generate AI descriptions of video content

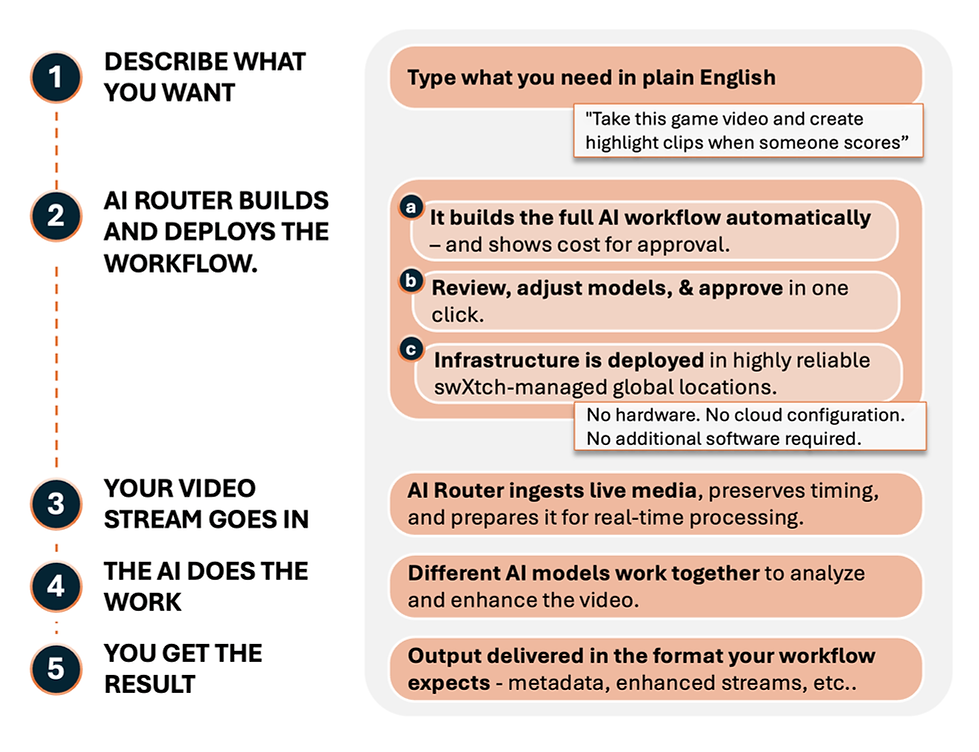

Connect Live Video to AI - Without the Infrastructure Headaches

You don't need AI or networking expertise: the platform is driven entirely by natural language commands.

Connect any live media to any AI model in minutes, not months

Discover, compare, and test models quickly

Natural Language Interface

Users interact with the platform using everyday language

One Credit System

Transport and inference covered together

One deterministic media fabric to ingest broadcast video, preserve timing, distribute at scale, and run inference anywhere.

See it in action below.

The technical complexity is hidden; you just see the results.

- Shorten Experimentation
- Reduce Engineering Burden
- Accelerate Time-to-Production

Benefits

Route broadcast-quality live video to AI inference across cloud, edge, and on-prem environments with time sync, multicast transport, and normalized model integration.

Infrastructure Gap in Live Media AI

Live Media AI Pipelines Are Hard to Implement

- Ingest fails because broadcast standards and time sync are not natively supported.
- Transport breaks under multi-Gb live streams.
- Deterministic live transport does not exist in standard AI infrastructure.
- No common live interface shared with AI models.
- Scale multiplies egress cost and complexity without end-to-end visibility.

How AI Router Solves It

End-to-End AI Media Connectivity Platform

- Broadcast-native ingest, with support for standards and time sync.
- Transport remains deterministic under multi-Gb live streams.
- A standardized live interface connects video to any AI model.
- Outputs are normalized across model classes.
- Scale is controlled with multicast fanout and full pipeline observability.
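
To illustrate why multicast fanout matters for egress, here is a rough back-of-the-envelope sketch in Python. It is not AI Router code: the function name `egress_gbps`, the 3.0 Gb/s stream rate, and the count of eight models are illustrative assumptions, and the model ignores protocol overhead and retransmission.

```python
def egress_gbps(stream_gbps: float, consumers: int, multicast: bool) -> float:
    """Estimate sender-side egress for delivering one live stream to several AI models.

    Simplified assumption: with unicast the sender emits one copy per consumer;
    with multicast the sender emits a single copy and the network replicates it.
    """
    if stream_gbps < 0 or consumers < 0:
        raise ValueError("stream_gbps and consumers must be non-negative")
    if consumers == 0:
        return 0.0
    return stream_gbps if multicast else stream_gbps * consumers


# Hypothetical scenario: one 3.0 Gb/s live stream feeding eight models in parallel.
unicast = egress_gbps(3.0, 8, multicast=False)   # 24.0 Gb/s leaving the sender
fanout = egress_gbps(3.0, 8, multicast=True)     # 3.0 Gb/s leaving the sender
print(f"unicast: {unicast} Gb/s, multicast: {fanout} Gb/s")
```

Under these assumptions, sender egress grows linearly with the number of models in the unicast case and stays flat with multicast.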

Production Infrastructure for Live AI

- Universal ingest of broadcast standards including SMPTE ST 2110, SRT, NDI
- Multicast-enabled high-bandwidth transport for parallel AI processing
- Standardized integration layer for any AI model
- Normalized outputs across model classes
- Customer-accessible monitoring across the entire pipeline
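
As a minimal sketch of what "normalized outputs across model classes" could look like in practice, the Python below maps two differently shaped model results onto one record type keyed by media time. Everything here is hypothetical: the `NormalizedResult` type, the adapter functions, and the raw field names (`pts`, `class_name`, `score`, `bbox`, `start`, `end`, `text`, `prob`) are assumptions for illustration, not the product's actual schema or API.

```python
from dataclasses import dataclass, field
from typing import Any


@dataclass(frozen=True)
class NormalizedResult:
    """Hypothetical common record shared by every model class."""
    model_class: str          # e.g. "object_detection", "captioning"
    media_time_s: float       # position in the source media timeline, in seconds
    label: str                # human-readable result (class name, caption text, ...)
    confidence: float         # 0.0 to 1.0
    extra: dict[str, Any] = field(default_factory=dict)  # model-specific details


def from_detector(raw: dict[str, Any]) -> NormalizedResult:
    # Assumed raw shape of an object detector's output.
    return NormalizedResult("object_detection", raw["pts"], raw["class_name"],
                            raw["score"], {"bbox": raw["bbox"]})


def from_captioner(raw: dict[str, Any]) -> NormalizedResult:
    # Assumed raw shape of a captioning model's output; confidence may be absent.
    return NormalizedResult("captioning", raw["start"], raw["text"],
                            raw.get("prob", 1.0), {"end_s": raw["end"]})


results = [
    from_captioner({"start": 12.4, "end": 14.0, "text": "Goal!", "prob": 0.93}),
    from_detector({"pts": 12.1, "class_name": "ball", "score": 0.88,
                   "bbox": [410, 220, 36, 36]}),
]

# Because every record carries the same timing field, results from different
# model classes can be merged into one timeline.
for r in sorted(results, key=lambda r: r.media_time_s):
    print(f"{r.media_time_s:6.1f}s  {r.model_class:17s} {r.label} ({r.confidence:.2f})")
```

The design point is that downstream consumers only need to understand one record shape, regardless of which model produced it.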