A Virtual Broadcast Engineer for AI-Powered Media Workflows that Integrates Microsoft Fabric

- Apr 16

Updated: Apr 18

AI is moving from experimentation to essential infrastructure in live media. As content volumes grow and production timelines compress, broadcasters need AI that works inside their existing workflows, not alongside them. The challenge isn’t access to AI models. It’s deploying and operating them at production scale without requiring specialized AI or networking expertise.

At NAB 2026, swXtch.io is demonstrating a different approach.

Introducing the AI Router

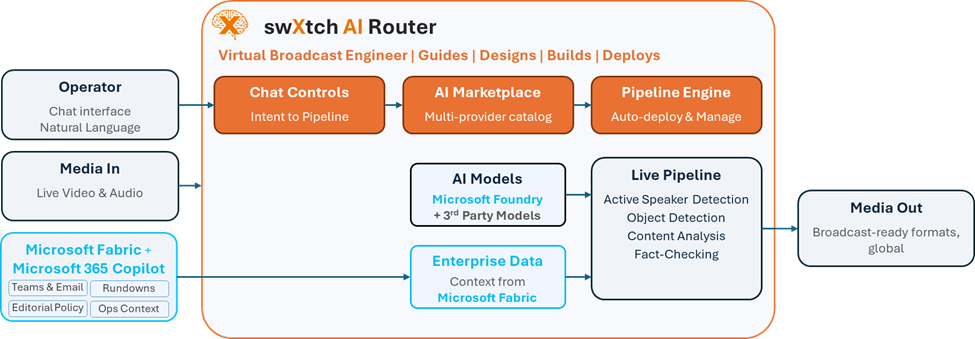

The swXtch AI Router is a virtual broadcast engineer that teaches operators what AI can do in their workflow, guides them through design decisions, and builds production-ready pipelines.

Using a natural language prompt, operators can:

• Ingest audio and video from on-prem or cloud environments

• Access AI models from any provider, regardless of where they run

• Incorporate proprietary or on-prem AI models

• Apply processing such as object detection, speaker detection, or content analysis

• Deliver outputs globally in formats that fit existing workflows

The platform automatically designs, deploys, and manages the full pipeline end-to-end.
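To make the ingest, process, and deliver flow above concrete, here is a minimal sketch of how such a pipeline could be described as data and sanity-checked. It is an illustration only: the `Stage` and `Pipeline` names, their fields, and the stage labels are hypothetical and are not the AI Router's actual API or configuration format.

```python
from dataclasses import dataclass, field


@dataclass
class Stage:
    kind: str      # "ingest", "process", or "deliver" (hypothetical vocabulary)
    name: str      # illustrative label, e.g. "object_detection"
    location: str  # "on-prem" or "cloud"


@dataclass
class Pipeline:
    stages: list[Stage] = field(default_factory=list)

    def validate(self) -> list[str]:
        """Return a list of problems; an empty list means the stage order is sane."""
        problems = []
        kinds = [s.kind for s in self.stages]
        if not kinds or kinds[0] != "ingest":
            problems.append("pipeline must start with an ingest stage")
        if not kinds or kinds[-1] != "deliver":
            problems.append("pipeline must end with a deliver stage")
        if "process" not in kinds:
            problems.append("pipeline has no processing stage")
        return problems


# Example: an on-prem feed, analyzed in the cloud, delivered to an existing workflow.
pipeline = Pipeline([
    Stage("ingest", "camera_feed", "on-prem"),
    Stage("process", "object_detection", "cloud"),
    Stage("deliver", "program_output", "cloud"),
])
print(pipeline.validate())
```

In the product as described, the operator would not write this by hand; the point of the sketch is only to show the kind of structure a natural language prompt has to be turned into.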

An Open Marketplace for AI Models

At the center of the platform is an AI Marketplace, which provides a curated catalog of video and audio inference models across providers, with off-the-shelf integrations ready to plug into live workflows. Through integration with Microsoft Foundry, backed by Azure, the AI Router can access models via Foundry Models and run them on Azure GPU resources. Operators can test and switch between multiple models within the same pipeline, compare performance in real time, and optimize for accuracy, latency, or cost.
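As a rough illustration of what optimizing for accuracy, latency, or cost could look like once several models have been measured on the same feed, consider the sketch below. The `ModelResult` type, the `pick_model` function, the model names, and every number are made up for this example; they do not come from the AI Marketplace or Foundry Models.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModelResult:
    name: str
    accuracy: float       # 0..1, higher is better
    latency_ms: float     # lower is better
    cost_per_hour: float  # lower is better


def pick_model(results, optimize_for):
    """Pick the best candidate for a single objective (hypothetical helper)."""
    if not results:
        raise ValueError("no model results to compare")
    if optimize_for == "accuracy":
        return max(results, key=lambda r: r.accuracy)
    if optimize_for == "latency":
        return min(results, key=lambda r: r.latency_ms)
    if optimize_for == "cost":
        return min(results, key=lambda r: r.cost_per_hour)
    raise ValueError(f"unknown objective: {optimize_for!r}")


# Invented measurements for three placeholder models on the same pipeline.
candidates = [
    ModelResult("model_a", accuracy=0.94, latency_ms=180.0, cost_per_hour=3.20),
    ModelResult("model_b", accuracy=0.90, latency_ms=60.0, cost_per_hour=1.80),
    ModelResult("model_c", accuracy=0.86, latency_ms=95.0, cost_per_hour=0.70),
]

for objective in ("accuracy", "latency", "cost"):
    print(objective, "->", pick_model(candidates, objective).name)
```

A real comparison would likely weigh several objectives at once; a single-objective pick is used here only to keep the example short.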

Integrating with Microsoft Fabric, Microsoft 365 Copilot, and Azure

The AI Router integrates with Microsoft Fabric and Microsoft 365 Copilot to bring enterprise data directly into live media workflows. Through this integration, operators can incorporate Microsoft Teams communications, email, and operational context alongside live video and audio to enable context-aware pipelines that support real-time decision-making. Azure provides the underlying compute, with the AI Router leveraging Azure GPU resources to run inference workloads at scale.

swXtch AI Router - Integrated with Microsoft Fabric

This means workflows aren’t limited to processing media in isolation. They can draw on business intelligence to inform what gets analyzed, prioritized, or distributed. For example:

• Production context from Teams or rundowns can determine which feeds or segments to prioritize

• Internal data can guide which content is analyzed, clipped, or distributed

• Operators can use Copilot to trigger or modify pipelines based on live production needs

• AI models can reference corporate communications and editorial policies in real time, such as live fact-checking against editorial standards

From Complexity to Simplicity

The AI Router removes the hardest parts of deploying AI in live production: infrastructure design, model integration, and pipeline orchestration. Instead of requiring dedicated teams with specialized expertise, the platform acts as a virtual broadcast engineer. Operators describe what they need, and the platform teaches, guides, and builds the solution.

AI becomes something operators use directly, without managing the underlying systems.

See It at NAB 2026

swXtch.io will demonstrate the AI Router within the Microsoft booth (West Hall, Booth W1731) at NAB Show 2026, as well as in private meetings for deeper technical discussions.

If you’re exploring how to bring AI into live media workflows without increasing complexity, we’d welcome the opportunity to connect.

For a demo, please contact the swXtch team at info@swxtch.io.